AI Posture Analysis: How Accurate Is Computer Vision for Posture?

Key Takeaways

- Phone-based posture analysis now lands within a few degrees of what a clinician measures from the side. Useful for screening, not for diagnosis.

- A single camera sees forward head posture and a rounded upper back clearly. It cannot tell if your hips are twisting, because it has no depth.

- How you take the photo matters as much as the app. Same distance, same camera height, same clothing, every time.

- The gap between a phone app and a motion-capture lab has roughly halved in the past few years, and it keeps shrinking.

AI-powered posture analysis uses computer vision to detect body landmarks from photos or video, calculate joint angles, and identify postural deviations. The technology exists in smartphone apps, webcam-based desktop tools, gym equipment, and clinical screening systems. The accuracy question matters because people are making health decisions based on what these systems tell them. I build one of these systems, so I have spent a lot of time staring at the gap between what the technology can detect and what it misses.

How Pose Estimation Works

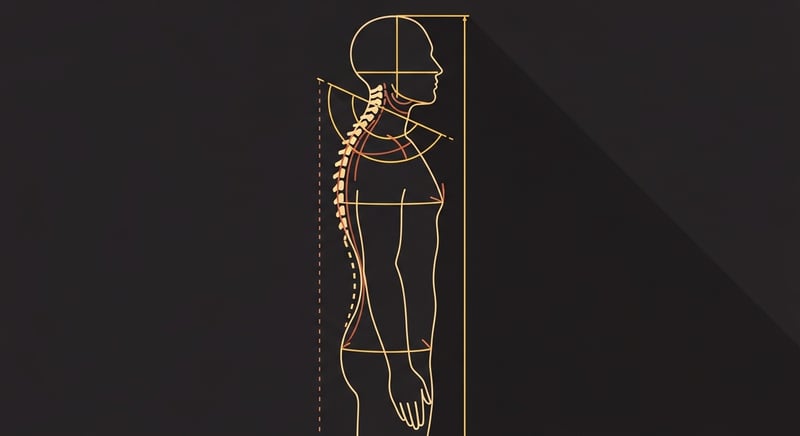

Pose estimation is a computer vision task where an algorithm identifies specific body landmarks (joints, extremities, key anatomical points) in an image or video frame. Most modern systems detect 17 to 33 landmarks depending on the framework, covering the major joints from ankle to ear. For posture analysis, the landmarks that matter most are ear, shoulder, hip, knee, and ankle in the sagittal (side) plane, plus shoulder height symmetry and lateral spine alignment in the coronal (front) plane.

The underlying technology is a convolutional neural network trained on hundreds of thousands of annotated images of human bodies. During training, human annotators mark the exact pixel coordinates of each landmark on every training image. The network learns to predict those coordinates from novel images it has never seen. The result is a model that can take a photo of a person and output a skeleton of connected points overlaid on the body.

From those points, posture analysis becomes geometry. If you know the pixel coordinates of the ear, shoulder, and hip, you can calculate the angles between the lines that connect them. The angle of the shoulder-to-ear line stands in for the craniovertebral angle, the primary clinical measure for forward head posture. Clinicians usually measure that angle from the horizontal at the C7 vertebra, so a smaller number means a more forward head. This article measures it from the vertical instead: a perfectly aligned head reads close to 0 degrees (ear directly over shoulder), and a reading of 15 degrees means the ear sits well in front of the shoulder line.
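The geometry can be sketched in a few lines of Python. This is a minimal illustration rather than UpWise's implementation: the function name `forward_head_angle` and the sample pixel coordinates are made up, and it assumes image coordinates where y grows downward and the subject faces right.

```python
import math

def forward_head_angle(ear, shoulder):
    """Angle in degrees between the shoulder-to-ear line and vertical.

    Points are (x, y) pixel coordinates with y increasing downward.
    0 means the ear is directly above the shoulder; positive values mean
    the ear sits in front of the shoulder for a subject facing right.
    """
    dx = ear[0] - shoulder[0]   # horizontal offset, forward is positive
    dy = shoulder[1] - ear[1]   # vertical offset, flipped so up is positive
    return math.degrees(math.atan2(dx, dy))

# Hypothetical landmarks from a side-profile photo
shoulder = (400.0, 600.0)
aligned_ear = (400.0, 400.0)
forward_ear = (453.6, 400.0)

print(round(forward_head_angle(aligned_ear, shoulder), 1))  # 0.0
print(round(forward_head_angle(forward_ear, shoulder), 1))  # 15.0
```

Because the result depends only on the ratio of the two offsets, it does not change if the whole photo is scaled up or down. It does change if the camera is tilted, which is why the capture setup matters.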

The accuracy question comes down to two things: how precisely the algorithm places each landmark, and how reliably the geometry holds up across different camera setups, body types, and conditions. For a broader perspective on how posture research uses these measurements, see our posture science overview.

The Major Frameworks Compared

Three frameworks dominate pose estimation in 2025. Each has a different architecture and set of tradeoffs.

OpenPose was the first real-time multi-person pose estimation system, published by Carnegie Mellon in 2017. It detects 25 body keypoints (with optional hand and face detection pushing the total to 135). OpenPose runs on GPU and is primarily used in research and desktop applications. It set the standard that everything else is measured against.1

Google MediaPipe is the cross-platform option. MediaPipe Pose detects 33 landmarks, including finger landmarks on each hand that OpenPose's 25-point body model leaves to its separate hand detector. It runs on mobile devices, browsers, and desktop with good performance. The landmark detection is optimized for single-person use cases, which makes it well-suited for posture assessment where you are analyzing one person at a time.2

Apple Vision is what UpWise uses. Apple introduced human body pose detection in the Vision framework starting with iOS 14, with 3D pose estimation added in iOS 17. Vision detects 19 body joints using on-device Neural Engine processing. The advantage of Vision over MediaPipe for iOS development is that it runs natively on Apple hardware with optimized Metal compute, which means lower latency and better battery life. The disadvantage is that it only works on Apple devices.3

"I chose Apple's Vision framework for UpWise because it runs entirely on-device. No photos leave the phone. For a health app that asks users to photograph their body, privacy is not a feature. It is a prerequisite."

All three frameworks can identify the major body landmarks with sufficient accuracy for general posture screening. The differences are in edge-case performance (unusual body positions, occlusion from clothing, extreme lighting), processing speed, and platform availability. For the specific task of analyzing a standing side-profile posture photo, all three produce comparable results.

2D vs 3D: What You Gain and What You Lose

The biggest technical limitation in consumer posture analysis is the difference between 2D and 3D pose estimation. Most smartphone posture apps work in 2D: they take a photo and detect landmarks in the image plane. This works for assessments where the relevant angles are visible in the camera's view. Side-profile shots show forward head posture. Front-view shots show lateral shifts and shoulder asymmetry.

But a 2D camera cannot see depth. If someone has a rotational asymmetry in their thoracic spine (one side of the ribcage is further forward than the other), a front-view 2D photo will not detect it. The rotation happens along the axis pointing toward the camera, which is invisible in a flat image. This is a real limitation for posture analysis because rotational deviations are common, especially in people with scoliosis or habitual one-sided postures.

3D pose estimation addresses this by reconstructing the body in three dimensions. Apple's Vision framework does this by using a machine learning model trained on 3D motion capture data to infer depth from a single 2D image. It is not as accurate as actual depth cameras (like the LiDAR sensor on Pro iPhones) or multi-camera setups, but it can detect major rotational deviations that pure 2D analysis misses.

UpWise uses both modes. The primary posture score is calculated from 2D landmark positions because those are the most reliable measurements. The 3D pose data is used as a secondary screen for rotational issues. If the 3D analysis detects significant asymmetry in the depth axis, the app flags it. But we weight that signal lower than the 2D measurements because the depth inference has higher error margins.
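One way such a secondary rotation screen could look is sketched below. This is an assumption-laden illustration, not the logic UpWise ships: the function names, the coordinate convention (metres, z pointing toward the camera), and the 10-degree threshold are all hypothetical.

```python
import math

ROTATION_FLAG_DEG = 10.0  # hypothetical threshold, not a clinical cutoff

def shoulder_rotation_deg(left_shoulder, right_shoulder):
    """Rotation of the shoulder line out of the image plane, in degrees.

    Points are (x, y, z) in metres with z as the depth axis. A value of 0
    means both shoulders are the same distance from the camera.
    """
    dx = right_shoulder[0] - left_shoulder[0]
    dz = right_shoulder[2] - left_shoulder[2]
    return math.degrees(math.atan2(dz, abs(dx)))

def rotation_flag(left_shoulder, right_shoulder, threshold=ROTATION_FLAG_DEG):
    """True when the inferred rotation is large enough to mention to the user."""
    return abs(shoulder_rotation_deg(left_shoulder, right_shoulder)) >= threshold

# Hypothetical 3D joints from a front-view photo
square = ((-0.18, 1.40, 0.00), (0.18, 1.40, 0.00))
rotated = ((-0.17, 1.40, -0.05), (0.17, 1.40, 0.05))

print(rotation_flag(*square))   # False
print(rotation_flag(*rotated))  # True
```

Keeping the output a plain flag rather than folding it into the score is one way to reflect the lower trust placed in inferred depth.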

For a comparison of how camera-based analysis stacks up against dedicated hardware, our article on wearable posture technology covers the sensor-based alternatives.

What Validation Studies Show

The most relevant validation studies compare AI pose estimation measurements against clinical gold standards: either manual goniometry (a physiotherapist measuring angles with a handheld protractor) or marker-based motion capture (reflective markers tracked by infrared cameras in a lab).

A 2022 systematic review in Sensors evaluated the accuracy of markerless pose estimation for clinical gait and posture analysis across 28 studies. For sagittal plane measurements (the side-view angles most relevant to posture), the pooled results showed a mean absolute error of 3.2 to 5.8 degrees compared to marker-based motion capture, depending on the joint and the framework used.4 That error range is too large for diagnostic purposes (where a 2-degree difference can change a classification) but acceptable for screening and longitudinal tracking.
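For readers unfamiliar with the metric, mean absolute error is the average size of the disagreement between two sets of measurements, ignoring direction. The sketch below uses invented angles purely to show the arithmetic; none of the numbers come from the review.

```python
def mean_absolute_error(app_angles, reference_angles):
    """Average absolute difference, in degrees, between paired measurements."""
    if not app_angles or len(app_angles) != len(reference_angles):
        raise ValueError("need two non-empty lists of equal length")
    total = sum(abs(a - r) for a, r in zip(app_angles, reference_angles))
    return total / len(app_angles)

# Invented example: four subjects measured by an app and by a reference system
app = [12.0, 8.5, 15.0, 5.0]
reference = [9.0, 11.5, 19.0, 7.0]

print(mean_absolute_error(app, reference))  # 3.0
```

An error of 3 degrees here does not say whether the app reads high or low. Over-estimates and under-estimates both count toward the total.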

A 2023 study specifically validating smartphone-based posture assessment against clinical photography found good to excellent intraclass correlation coefficients (ICC 0.78 to 0.92) for craniovertebral angle, thoracic kyphosis angle, and lateral head tilt.5 The agreement was strongest for craniovertebral angle and weakest for thoracic kyphosis, which makes sense because kyphosis involves a curved region of the spine that is harder to reduce to a single angular measurement.

"A 3 to 5 degree error margin means the technology can reliably tell you whether your head sits 15 degrees forward or 5 degrees forward. It cannot tell you whether it is 12 degrees or 14 degrees. For daily tracking, that resolution is plenty."

What these validation numbers mean practically is that AI posture analysis is accurate enough to detect the kind of deviations that matter for everyday posture improvement. If your head is significantly forward, the system will see it. If your shoulders are notably uneven, it will flag it. Where it falls short is in the fine-grained measurements that a clinical setting requires, things like measuring the exact Cobb angle of a scoliotic curve or detecting a 3-degree pelvic tilt.

Test-retest reliability is another important consideration. A 2021 study found that repeated measurements of the same person using the same smartphone app and camera position produced results within 1.5 degrees of each other.6 That internal consistency matters for tracking change over time. If you measure your posture every day and the app says your craniovertebral angle improved by 4 degrees over 6 weeks, you can trust that the change is real and not just measurement noise. The broader evidence on posture app effectiveness depends on this kind of reliability.
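A rough way to apply that idea is to compare the size of a change against the repeat-measurement spread. The sketch below is a rule of thumb under stated assumptions, not a statistical test: the function name is hypothetical, the readings are invented, and the 1.5-degree figure is simply the one quoted above.

```python
from statistics import mean

REPEATABILITY_DEG = 1.5  # repeat-measurement spread quoted in the text

def change_exceeds_noise(baseline, recent, noise_deg=REPEATABILITY_DEG):
    """Return (change, exceeds) for two groups of angle readings in degrees.

    Averaging a few readings on each side reduces the influence of any
    single noisy photo before the comparison is made.
    """
    change = mean(recent) - mean(baseline)
    return change, abs(change) > noise_deg

# Invented readings: week 1 versus week 6
print(change_exceeds_noise([14.0, 15.0, 14.5], [10.0, 11.0, 10.5]))  # (-4.0, True)
print(change_exceeds_noise([14.0, 15.0, 14.5], [14.0, 14.5, 13.5]))  # (-0.5, False)
```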

Practical Limitations That Affect Every System

Paper accuracy and real-world accuracy are different things. The validation studies described above were conducted under controlled conditions: consistent lighting, standardized camera placement, subjects in fitted clothing. Real users take posture photos in bedrooms with uneven lighting, wearing baggy sweatshirts, holding the phone at random angles. Every one of those variables introduces error.

Camera angle matters more than people expect. A phone angled 10 degrees upward versus 10 degrees downward will produce measurably different craniovertebral angle readings from the same person standing in the same position. In UpWise, I addressed this by adding a leveling guide that uses the phone's accelerometer to confirm the camera is level before capturing the posture photo. It adds a step, but it eliminates a major source of measurement variability.
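The idea behind a leveling guide can be sketched from a gravity reading. The axis convention here is an assumption (x to the right of the screen, y toward the top of the phone, z out of the screen, so an upright portrait phone reads roughly (0, -1, 0) in g units), and the 2-degree tolerance and function names are hypothetical.

```python
import math

LEVEL_TOLERANCE_DEG = 2.0  # hypothetical tolerance

def tilt_degrees(gx, gy, gz):
    """Pitch and roll in degrees from a gravity vector in g units.

    Pitch is the forward/backward lean of the phone, roll is the sideways
    rotation. Both are 0 for a phone held perfectly upright in portrait.
    """
    pitch = math.degrees(math.atan2(gz, -gy))
    roll = math.degrees(math.atan2(gx, -gy))
    return pitch, roll

def is_level(gx, gy, gz, tolerance=LEVEL_TOLERANCE_DEG):
    """True when both pitch and roll are within the tolerance."""
    pitch, roll = tilt_degrees(gx, gy, gz)
    return abs(pitch) <= tolerance and abs(roll) <= tolerance

upright = (0.0, -1.0, 0.0)
leaning = (0.0, -math.cos(math.radians(10)), math.sin(math.radians(10)))

print(is_level(*upright))  # True
print(is_level(*leaning))  # False
```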

Distance from the camera changes perspective geometry. A photo taken from 3 feet away will show different proportional relationships between body landmarks than one taken from 10 feet away, because body parts nearer the lens are magnified more than those farther away. Smartphone main cameras are wide-angle (typically around 26mm equivalent focal length), so at close range this perspective distortion is noticeable and stretches landmarks near the edges of the frame. Keeping the subject centered and at a consistent distance (6 to 8 feet works well) minimizes this effect.
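A simple pinhole-camera model shows why distance matters. The sketch below is illustrative only: the body dimensions are invented, the lens sits at chest height, and the ear is assumed to be 0.15 m farther from the camera than the near shoulder in a side view. Distances are in metres (0.9 m is about 3 feet, 3.0 m about 10 feet).

```python
import math

# Invented side-view geometry, relative to the lens:
# (forward offset, height above lens, extra depth behind the near shoulder)
SHOULDER = (0.00, 0.35, 0.00)
EAR = (0.05, 0.55, 0.15)

# Angle a distortion-free (infinitely distant) view would measure
TRUE_ANGLE = math.degrees(math.atan2(EAR[0] - SHOULDER[0], EAR[1] - SHOULDER[1]))

def apparent_angle(camera_distance_m):
    """Shoulder-to-ear angle from vertical as seen by a pinhole camera."""
    def project(point):
        x, y, extra_depth = point
        z = camera_distance_m + extra_depth
        return x / z, y / z  # perspective divide, focal length of 1
    su, sv = project(SHOULDER)
    eu, ev = project(EAR)
    return math.degrees(math.atan2(eu - su, ev - sv))

for distance in (0.9, 2.0, 3.0):
    print(distance, round(apparent_angle(distance), 1), round(TRUE_ANGLE, 1))
```

In this toy setup the close-range reading is inflated by several degrees, and the error shrinks as the camera moves back. The exact numbers depend entirely on the assumed geometry, so what the sketch shows is the trend.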

Clothing is a detection challenge. Pose estimation algorithms are trained on images where body landmarks are visible through fitted clothing or exposed skin. Loose or layered clothing shifts the apparent position of joints, particularly shoulders and hips. A heavy winter coat can add 2-3 inches to apparent shoulder width and move the detected shoulder landmark away from the actual joint center. Clinical studies typically control for this by having subjects wear fitted clothing, but consumer apps cannot enforce a dress code.

Body type affects landmark detection confidence. Most pose estimation models are trained disproportionately on images of average-build adults. Detection accuracy drops for people at the extremes of body size (very thin, very heavy), for children, and for pregnant women. This is a training data bias problem, not a fundamental algorithmic limitation, and it is improving as training datasets become more diverse. But for now, if you are outside the "average" body type that dominates training sets, you may see more inconsistent results.

How It Compares to Clinical Assessment

Clinical posture assessment uses one of three methods depending on the setting: visual observation by a trained clinician, manual goniometry (measuring joint angles with a protractor-like tool placed on the body), or marker-based motion capture in a lab. Each has its own accuracy profile, and it is worth understanding where AI fits in the spectrum.

Visual observation by a clinician, which is what most people experience in a physical therapy or chiropractic setting, is surprisingly subjective. A 2019 study measuring inter-rater reliability of visual posture assessment found only moderate agreement between trained physiotherapists rating the same patients (kappa values of 0.40 to 0.65).7 That means two experienced clinicians looking at the same person often disagree on the severity of postural deviations. AI pose estimation, for all its limitations, produces consistent numbers. It will give you the same reading every time for the same input, which visual assessment cannot guarantee.

"Two experienced physiotherapists looking at the same patient often disagree on posture severity. AI gives a consistent number every time. Consistency may matter more than absolute precision for tracking improvement."

Manual goniometry is more precise than visual observation, with reported inter-rater reliability ICC values of 0.80 to 0.95 for most joint angles. But it requires physical contact, a trained clinician, and 15-30 minutes per assessment. AI pose estimation from a single photo takes seconds and requires no clinical expertise from the user. The validation studies above put AI measurements roughly 3 to 5 degrees away from their clinical reference values. Whether that gap matters depends on the use case.

Marker-based motion capture is the gold standard with sub-degree accuracy and 3D reconstruction. It is also confined to research labs, costs upward of $50,000 per setup, and requires subjects to wear reflective markers taped to their skin. It is irrelevant for daily consumer use. The at-home posture assessment guide on our blog works within the accuracy range that smartphone analysis provides.

The right framing is not "AI versus clinical assessment" but "what level of accuracy does daily posture tracking require?" If the answer is "enough to detect major deviations and track whether they are improving or worsening," then smartphone pose estimation passes that bar. If the answer is "enough to diagnose a specific spinal condition and determine its severity to guide medical treatment," then it does not. Posture correctors operate in a similar middle ground between clinical tools and consumer products.

What I Have Learned Building UpWise's Posture Engine

I have spent the better part of three years working with Apple's Vision framework to build UpWise's posture analysis system. Some things I have learned only through building, not from reading papers.

The first is that the raw landmark detection is not the hard part. Apple's Vision framework places landmarks on a standing person with enough accuracy for posture scoring. The hard part is everything around the detection: deciding what to measure, how to weight different deviations, how to account for individual variation, and how to present the results in a way that is both accurate and actionable.

For example, UpWise measures craniovertebral angle, shoulder alignment, lateral trunk shift, and hip alignment from each posture photo. These measurements go through what I call the ergonomics engine, a scoring system that weights each measurement based on its clinical significance and the user's history. Forward head posture counts for more in the overall posture score than a lateral shift of similar size, because the clinical consequences are different and the research evidence is stronger.
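A weighted score of this kind might be sketched as follows. Everything specific here is hypothetical: the weights, the 20-degree cap, and the function name are invented for illustration, and the sketch ignores the user-history component entirely.

```python
# Hypothetical weights; they sum to 1.0 so the worst case scores 0
WEIGHTS = {
    "forward_head": 0.40,
    "shoulder": 0.25,
    "trunk_shift": 0.20,
    "hip": 0.15,
}
FULL_PENALTY_DEG = 20.0  # hypothetical deviation at which a measure is maxed out

def posture_score(deviations_deg):
    """Combine per-measure deviations (degrees) into a 0-100 score."""
    penalty = 0.0
    for name, weight in WEIGHTS.items():
        deviation = abs(deviations_deg.get(name, 0.0))
        severity = min(deviation / FULL_PENALTY_DEG, 1.0)  # clamp to 0..1
        penalty += weight * severity
    return round(100.0 * (1.0 - penalty), 1)

print(posture_score({}))                      # 100.0
print(posture_score({"forward_head": 10.0}))  # 80.0
print(posture_score({"shoulder": 10.0}))      # 87.5
```

With these made-up weights, the same 10-degree deviation costs more points when it is in the head position than when it is in the shoulders.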

The second lesson is that consistency across measurements matters more than absolute accuracy on any single measurement. If UpWise says your craniovertebral angle is 12 degrees today and 10 degrees next week, the 2-degree improvement is larger than the roughly 1.5-degree spread between repeat measurements, so it most likely reflects a real change. Whether the "true" clinical value was 13 degrees instead of 12 is less important than the fact that the system can detect a change of that size. This is why standardizing the photo setup (same position, same distance, level camera) is so important.

The third lesson is that 2D analysis is enough for the vast majority of users. I implemented 3D pose detection as a secondary screen, and it adds value for users with rotational asymmetries. But for the primary use case of tracking forward head posture, shoulder rounding, and lateral shift over time, the 2D sagittal and coronal measurements are sufficient and more reliable than the inferred 3D coordinates.

The fourth is that users do not care about degrees. They care about trends. Nobody opens a posture app and thinks "I need to reduce my craniovertebral angle by 3 degrees." They think "is my posture getting better?" The job of the posture engine is to translate angular measurements into something a person can act on: a score that goes up when things improve, specific areas to focus on, and exercises that target their weakest points.
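One simple way to turn a series of scores into a trend is a least-squares slope, expressed as points gained per week. This is a generic sketch with invented data and a hypothetical function name, not a description of how UpWise computes its trend.

```python
def trend_per_week(days, scores):
    """Least-squares slope of score against time, in points per week."""
    if len(days) != len(scores) or len(days) < 2:
        raise ValueError("need at least two paired measurements")
    mean_x = sum(days) / len(days)
    mean_y = sum(scores) / len(scores)
    sxx = sum((x - mean_x) ** 2 for x in days)
    if sxx == 0:
        raise ValueError("measurements must span more than one day")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(days, scores))
    return 7.0 * sxy / sxx

# Invented weekly check-ins over a month
days = [0, 7, 14, 21, 28]
scores = [70, 72, 73, 75, 78]

print(round(trend_per_week(days, scores), 1))  # 1.9
```

A positive slope answers the "is my posture getting better?" question directly, and fitting a line across all points keeps one odd photo from dominating the answer.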

Where the Technology Is Heading

Pose estimation accuracy has improved substantially since the first consumer frameworks launched around 2018. Benchmark comparisons show that mean landmark detection error has dropped by 40 to 60% across the major frameworks between 2018 and 2024.8 The gains come from larger training datasets, more efficient neural network architectures, and hardware improvements (Apple's Neural Engine, Google's Tensor chip) that allow more complex models to run on-device in real time.

Three specific developments are worth watching. First, temporal pose analysis: instead of analyzing single frames, newer systems track body landmarks across video sequences and use temporal smoothing to produce more stable measurements. This reduces the frame-to-frame jitter that affects single-frame pose estimation and enables real-time posture monitoring during activities like working at a desk.
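Temporal smoothing can be as simple as an exponential moving average over the per-frame angle. The sketch below shows that one technique as an example. Production systems may use different filters, and the `alpha` value and sample angles are invented.

```python
def smooth_angles(angles, alpha=0.3):
    """Exponentially smooth a sequence of per-frame angles (degrees).

    alpha near 1 follows new frames closely; alpha near 0 smooths heavily
    but reacts more slowly to genuine posture changes.
    """
    if not 0.0 < alpha <= 1.0:
        raise ValueError("alpha must be in (0, 1]")
    smoothed = []
    for angle in angles:
        if not smoothed:
            smoothed.append(float(angle))  # first frame has nothing to blend with
        else:
            smoothed.append(alpha * angle + (1.0 - alpha) * smoothed[-1])
    return smoothed

# Invented per-frame readings with jitter around 12-13 degrees
raw = [12, 14, 11, 13, 12, 15, 11]
smoothed = smooth_angles(raw)

print(max(raw) - min(raw))                      # 4
print(round(max(smoothed) - min(smoothed), 2))  # 1.09
```

The smoothed series swings far less than the raw one, at the cost of lagging slightly behind real movement.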

Second, personalized pose models. Current frameworks use a one-size-fits-all detection model. Research is underway on systems that fine-tune the pose model to an individual's body proportions after a calibration period. This could reduce the body-type bias problem and improve accuracy for people outside the training data's demographic center.

Third, integration with other sensor data. iPhones already have accelerometers, gyroscopes, barometers, and (on Pro models) LiDAR. Fusing camera-based pose estimation with accelerometer data about phone orientation and LiDAR depth measurements could produce posture assessments that approach clinical accuracy without clinical equipment. We are not there yet, but the hardware pieces are already in the phone.

The trajectory is clear: consumer pose estimation is getting more accurate, more accessible, and more contextual. The gap between a phone-based posture check and a clinical motion capture session is narrowing, and it will continue to narrow. The clinical value of that improvement depends on whether app developers build responsible products that communicate accuracy limitations honestly and refer users to professionals when the measurements suggest something beyond a daily posture check.

Frequently Asked Questions

How accurate is AI posture analysis compared to clinical assessment?

Validation studies show that 2D pose estimation frameworks achieve angular measurements within roughly 3 to 5 degrees of clinical reference measurements for sagittal plane assessments like forward head posture and thoracic kyphosis. This is accurate enough for screening and tracking changes over time, but not sufficient for clinical diagnosis. Clinical motion capture systems with reflective markers remain the gold standard, with sub-degree accuracy.

What is the difference between 2D and 3D pose estimation for posture?

2D pose estimation detects body landmarks from a single camera view and can measure angles in the visible plane, making it suitable for side-profile assessments of forward head posture and kyphosis. 3D pose estimation reconstructs the body in three dimensions, allowing measurement of rotational deviations and depth. 3D is more comprehensive but requires multiple cameras, a depth sensor, or a framework like Apple Vision that infers depth from a single camera using machine learning models trained on 3D data.

Can a smartphone camera detect posture problems?

Yes, for common deviations. Modern smartphone pose estimation can reliably detect forward head posture, excessive thoracic kyphosis, lateral shifts, and uneven shoulder height from a single photo. It struggles with rotational asymmetries, subtle pelvic tilt in the frontal plane, and any deviation that requires depth perception from a 2D image. For most people checking their daily posture, a smartphone provides enough accuracy to identify the main issues and track improvement.

What frameworks are used for AI posture analysis?

The most widely used frameworks are Apple Vision (for iOS apps), Google MediaPipe (cross-platform), and OpenPose (research and desktop applications). Apple Vision provides both 2D and 3D human body pose detection natively on iOS with optimized performance on the Neural Engine. MediaPipe offers 33 body landmarks with good accuracy across platforms. OpenPose was the pioneering framework and remains the academic reference standard.

What are the main limitations of AI posture analysis?

The four main limitations are: depth perception from a single 2D camera (cannot see rotational deviations), sensitivity to camera angle and distance (results change if the phone is tilted), clothing and body type affecting landmark detection accuracy, and the absence of clinical context (an AI system can measure angles but cannot diagnose conditions like scoliosis or disc herniation that require imaging).

Is AI posture analysis improving?

Yes, measurably. Benchmark studies show a 40-60% reduction in mean landmark detection error between 2018 and 2024 frameworks. Apple Vision's 3D pose estimation, introduced with iOS 17, added depth inference from a single camera using Neural Engine processing. The accuracy gap between consumer pose estimation and clinical motion capture is shrinking with each framework update.